Asus Strix Radeon R9 Fury DC3 4G Review: AMD's Big Bet Almost Pays Off

AMD's exotic Radeon R9 Fury series was unveiled with much fanfare a short while ago. It's no secret that AMD's entire product portfolio has been pretty stagnant for years now, but this was the company's - if not the entire industry's - most interesting announcement in a long time. While most were expecting one or two launches, AMD showed off four products: the top-end Radeon R9 Fury X with its industry-first integrated liquid cooler, an air-cooled equivalent called Radeon R9 Fury, a compact variant for small-form-factor PCs called the Radeon R9 Nano, and an unnamed dual-GPU monster that will not be available for some time yet.

The Fury X was the first to launch internationally, followed by the less expensive Fury a few weeks later. However, the latter card has arrived first in India. Notably, board partners such as Asus and XFX will not be able to customise their Fury X cards with different coolers, but they can with the Fury. India is a cost-sensitive market with relatively few enthusiasts, so perhaps AMD felt that the water-cooled Fury X would not have much appeal here.

With a Fiji-based card finally in our hands, we can explore AMD's most significant launch in years. The company hasn't been able to release a major GPU refresh for a long time but it has made one very, very important leap with this generation: 3D-stacked high-bandwidth memory (HBM), the successor to GDDR5. The graphics card's RAM banks are not arrayed around the GPU on the circuit board, but are instead placed right next to the GPU die within the same package, almost like a gigantic processor cache.

High-bandwidth 3D stacked memory

Obviously, the huge story here is that AMD is the first to market with stacked HBM on the GPU package. This is a phenomenal technical and technological achievement - it has taken the better part of a decade of research and development, not to mention massive investments, to pull this off. This is the result of AMD being bold and taking a huge step. Despite its persistent financial struggles in the face of a dwindling overall PC market, AMD evidently remained committed to this project all along in the hope that it would lead to a massive turnaround. The Radeon R9 Fury cards are the first tangible results of that long-term thinking.

Long ago, AMD engineers predicted that memory bandwidth would become a huge bottleneck for graphics cards (and computers at large). Brute-force speed increases can only scale so far before increased power consumption cancels out any benefits. Instead, AMD decided on a drastic departure which would require not only a whole new memory architecture, but also new manufacturing techniques and new supporting standards to make it materialise.

The goal was to raise bandwidth without raising clock speeds, by using lots of low-clocked memory chips, each with its own pathway to the GPU - in effect, a much wider bus serving them all. For the first generation of HBM, as seen here, AMD has gone with memory running at an effective 1GHz on a 4,096-bit bus, compared to effective speeds of up to 7GHz on the 128-bit, 256-bit or 384-bit buses that have been common thus far.
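The trade-off between bus width and clock speed can be checked with some quick arithmetic. The sketch below assumes that the 1GHz and 7GHz figures are effective per-pin data rates in gigabits per second, which the review does not spell out; the function name is ours, and the results are theoretical peaks rather than measured throughput.

```python
def peak_bandwidth_gb_per_s(bus_width_bits, data_rate_gbps):
    """Peak theoretical bandwidth in GB/s.

    Each pin of the bus moves data_rate_gbps gigabits per second,
    so total gigabits/s = width * rate; divide by 8 for gigabytes.
    """
    return bus_width_bits * data_rate_gbps / 8


# First-generation HBM as described above: 4,096-bit bus,
# assumed effective rate of 1Gbps per pin.
hbm = peak_bandwidth_gb_per_s(4096, 1.0)

# GDDR5 at the "up to 7GHz" figure, on the three common bus widths.
gddr5 = {width: peak_bandwidth_gb_per_s(width, 7.0) for width in (128, 256, 384)}

print(f"HBM, 4096-bit: {hbm:.0f} GB/s")          # 512 GB/s
for width, bandwidth in gddr5.items():
    print(f"GDDR5, {width}-bit: {bandwidth:.0f} GB/s")  # 112, 224, 336 GB/s
```

Under these assumptions, the wide and slow approach still comes out well ahead of even a 384-bit GDDR5 setup on paper.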

Sixteen traditional chips would have been required to achieve that kind of bandwidth, but placing each of them around the GPU and wiring them to it would have been impossibly inefficient. So, AMD started working on placing the memory chip dies one on top of another - a technique known as 3D die stacking - and bringing them physically closer to the GPU.

The end result is four stacks of four memory dies on the same substrate as the GPU itself. In order to wire all of them together, AMD had to develop what it calls an "interposer" - the physical medium through which the memory and GPU communicate. The interposer itself is a silicon chip with thousands of microscopic traces, packed much more densely than would be possible on a circuit board. In addition, AMD uses what are called Through-Silicon Vias (TSVs), which are vertical connections that run through the interposer and memory die stacks, connecting the higher layers without interfering with lower ones' operations.

AMD still has to contend with the realities of physics, so one big limitation here is that the 16 dies still only amount to 4GB of total memory. That's not a lot for a modern graphics card, least of all a flagship one. There's also only so far a new type of memory can improve performance if the GPU itself isn't strong enough. Finally, the whole GPU-HBM-interposer contraption is a lot more complicated to manufacture, raising costs. HBM is the sort of thing that will undoubtedly be revolutionary, but could need a generation or two to really get to that point.
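The 4GB ceiling follows directly from the layout described above. As a rough sketch, the per-die capacity and per-stack bus width below are simply derived by dividing the review's totals evenly; they are not figures quoted by AMD in this article.

```python
STACKS = 4             # HBM stacks beside the GPU (from the review)
DIES_PER_STACK = 4     # memory dies in each stack (from the review)
TOTAL_MEMORY_GB = 4    # total VRAM (from the review)
BUS_WIDTH_BITS = 4096  # total memory bus width (from the review)

total_dies = STACKS * DIES_PER_STACK

# Derived values, assuming capacity and bus width are split evenly.
mb_per_die = TOTAL_MEMORY_GB * 1024 // total_dies
bus_bits_per_stack = BUS_WIDTH_BITS // STACKS

print(f"{total_dies} dies at {mb_per_die}MB each")          # 16 dies at 256MB each
print(f"{bus_bits_per_stack}-bit interface per stack")      # 1024-bit interface per stack
```

In other words, going beyond 4GB would take denser dies, taller stacks, or more stacks on the interposer.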

The R9 Fury GPU

Isolated from its surroundings, the core GPU logic is fairly ordinary. Codenamed Fiji, this is the only new GPU in AMD's 2015 lineup - all the Radeon 3xx cards are basically just Radeon 2xx ones with minor updates. Even Fiji doesn't have any generational advantages over its 3-series siblings other than the HBM. We'll have to wait at least another generation for a leap beyond the ancient 28nm process.

Contrary to early speculation, the air-cooled Fury is not identical to the liquid-cooled Fury X in terms of specifications. The clock speed is reduced by a tiny fraction, from 1.05GHz to 1GHz, and the Fury has a few execution units disabled for a total of 3,584 as opposed to 4,096. These are pretty minor cuts, but we suspect that thermals will have a bigger part in any performance difference there is between the two.

Asus hasn't overclocked this card by default - note the lack of the letters OC after DC3 in the model number - but there is some leeway for tweaking using the bundled software.

The Asus Strix Radeon R9 Fury DC3

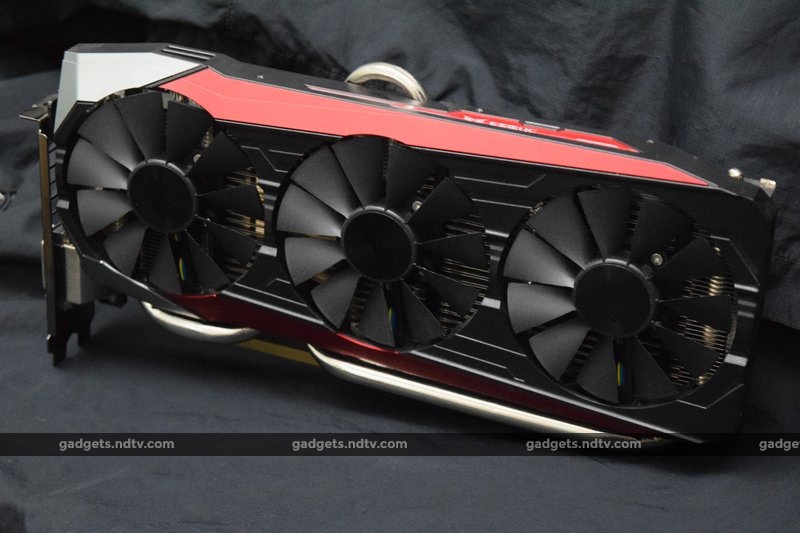

This is one massive graphics card. We've gotten used to seeing cards with small, understated coolers, thanks to general improvements in power efficiency over the past few years resulting in lower heat dissipation. All that goes out the window here - Asus has outfitted its Strix Radeon R9 Fury with a three-fan monster cooler that extends well beyond the edge of a standard ATX motherboard and rises about an inch and a half above normal.

We're a bit surprised, because one of the water-cooled Radeon R9 Fury X's biggest selling points is its compact 7.5-inch depth. It seems that more than the card itself, it's the air cooler that needs to be so huge. In fact it's as big as some of the dual-GPU cards we've come across.

You're going to need a well-ventilated cabinet since the three fans expel hot air inside your PC. Luckily they don't need to be spinning all the time - Asus' cooler can work passively when there isn't much of a load on the GPU. Parts of the massive heatpipes can be seen above and below the shroud. While Fiji is an all-new GPU, it's clear that AMD and Asus don't expect it to match Nvidia's current architectural advantages in terms of heat, noise, and power consumption.

In terms of styling, the Strix R9 Fury DC3 is a bit sedate. Even the red accents are a dull, deep shade. Three fans instead of two mean that this card can't be anthropomorphised to look like owls' faces in the same way that recent DC2OC models have been. In fact the heatsink is so large that it seems there really was no room for creative design touches at all. The only real bit of flair is a lighted red strip on the top. We didn't really like its plasticky styling or the fact that it pulses when your PC goes to sleep and cannot be controlled.

The two 8-pin PCIe power connectors stick out upwards but are masked by the overhang of the cooler shroud. Each one has an LED that lights up red if there's a loose connection or white if everything's fine. The rear of the card has a backplate for stability. On the far end, you'll find one HDMI port and three DisplayPort 1.2 video outputs along with a legacy dual-link DVI-D port (which Fury X cards lack). Our review unit came with a driver disc and a single 2x6-pin to 1x8-pin PCIe power adapter.

Performance

Our lab test bench consists of the following components:

- Intel Core i7-4770K CPU at stock speeds

- Asus Z97-Pro (Wi-Fi ac) motherboard

- 16GB of Adata 1600MHz RAM

- 120GB Kingston HyperX Fury SSD

- Cooler Master Hyper 212X CPU cooler

- Corsair RM650 power supply

- Asus PB287Q 4K monitor

- Windows 8.1

The driver used was AMD's Catalyst 15.7.1. We tested using a variety of synthetic benchmarks, built-in game benchmarks and our own run-throughs with frames per second and frame timings measured using FRAPS.

Asus' DirectCU 3 cooler might reduce noise to zero when the card is idle, but there is a noticeable hum when the fans spin up. Depending on the load, though, not all three may spin. The middle one, directly above the GPU, is the first to activate.

We started with 3DMark, the longtime graphics card benchmark suite. We ran the advanced Fire Strike test three times, using optimised presets for 1080p, 1440p and 4K. The scores were 12,031, 6,502, and 3,583 respectively. At the lower end, the Strix Radeon R9 Fury was rated as better than 93 percent of all recorded scores, but at the high end, it was only better than 51 percent. For reference, the scores were all a bit lower than those recorded with an Nvidia GeForce GTX 980 Ti on exactly the same test bench.

We also ran Star Swarm, an intense 3D space battle simulation which can take advantage of AMD's optimised Mantle API. Without it, the card scored 47.71fps but with it, the score rose dramatically to 78.17fps. Unigine Valley gave us 71.5fps overall at 1080p and 45.4fps overall at 1440p. In all cases, this card lagged behind the GeForce GTX 980 Ti.
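To put those figures in proportion, here is a quick sketch that turns the Star Swarm and Unigine Valley results quoted above into percentage changes. The helper name is ours; the inputs are the review's own numbers.

```python
def percent_change(baseline, new_value):
    """Percentage change from baseline to new_value (negative means a drop)."""
    return (new_value / baseline - 1) * 100


# Star Swarm: without Mantle vs with Mantle.
mantle_gain = percent_change(47.71, 78.17)

# Unigine Valley: moving from 1080p to 1440p.
valley_drop = percent_change(71.5, 45.4)

print(f"Mantle uplift in Star Swarm: {mantle_gain:.1f}%")  # 63.8%
print(f"Valley, 1080p to 1440p: {valley_drop:.1f}%")
```

The Mantle result works out to an uplift of roughly 64 percent over the default rendering path on the same hardware.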

GTA V is one of the most demanding games currently available, and has fine-grained visual quality settings and a very thorough built-in benchmark. We first tried a few manual playthroughs but kept experiencing issues at 4K thanks to the 4GB VRAM limit. GTA V can easily burn through 6GB of memory so we had to reduce quite a few parameters. We settled on 16xAF, no AA, and medium settings all around. The game was still quite enjoyable, but we know it could have been better. With that done, the internal benchmark gave us an average of 31.311fps - a little underwhelming.

Far Cry 4 can also be pretty demanding, but it ran quite smoothly at 4K, with the quality set to Ultra, and SMAA and HBAO+ enabled. We recorded 38fps on average, with frame times at 26.2ms on average and 34ms at the 99th percentile.

Battlefield 4 ran reasonably well at 4K, with the quality set to Ultra. We managed to get 35fps on average, with frame times of 28.7ms on average and 41.5ms at the 99th percentile. Lowering the resolution to 1440p with all other settings the same resulted in a huge jump to 85fps and very tight timings of 11.8ms and 17ms respectively.

On the other hand, Crysis was utterly unplayable at 4K with the settings at Very High. Frame rates often fell to single-digit levels and we couldn't get through enough of a level to record a meaningful sample of data. We reverted to 1440p with 8xMSAA, 8xAF and Very High quality, and were able to play at a comfortable 32fps with frame times of 31ms on average and 42.1ms at the 99th percentile.
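For readers unfamiliar with frame-time figures, the sketch below shows how they relate to frame rates. It assumes the nearest-rank method for the 99th percentile, which may not match the exact method used for the numbers above, and the sample list is made up purely for illustration; it is not data from our test runs.

```python
import math


def frame_time_ms(fps):
    """Average time per frame in milliseconds for a given frame rate."""
    return 1000.0 / fps


def percentile(samples, pct):
    """Nearest-rank percentile: the value that pct percent of samples do not exceed."""
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


# 38fps works out to roughly 26.3ms per frame, in line with the
# 26.2ms average we recorded in Far Cry 4.
print(f"{frame_time_ms(38):.1f}ms")

# Hypothetical per-frame timings in milliseconds (illustration only).
samples = [25, 26, 24, 27, 26, 25, 34, 26, 25, 26]
print(f"99th percentile: {percentile(samples, 99)}ms")  # 34ms
```

The 99th percentile matters because a single slow frame barely moves the average, but it is exactly what shows up on screen as stutter.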

Verdict

We had high hopes for AMD's revolutionary new technology, especially given how badly the company needs a breakthrough. Unfortunately, that hasn't happened yet. The Radeon R9 Fury is a powerful high-end GPU, but it doesn't do anything particularly new or bring about any drastic improvement that its competition hasn't already achieved. It is best matched against the GeForce GTX 980, which is a little less than a year old but still going strong. The new GeForce GTX 980 Ti beats the Radeon R9 Fury consistently, though that option is slightly more expensive.

Having 4GB of VRAM on a graphics card of this class is definitely a handicap. We ran into a roadblock with one current AAA title, which is a good indication of what games releasing over the next few years will require in terms of hardware resources. The long-term prospects for Radeon R9 Fury card owners are not very clear at this moment.

The good news is that the HBM architecture is entirely transparent to hardware, software and the end user experience. It just works, and it's possible that things will improve over time as developers have a chance to optimise for it. Things will also become interesting as DirectX 12 starts seeing mainstream adoption. It's also always good to have options - hopefully GeForce GTX 980 cards will now see a price drop, which has been overdue for a while already.

AMD fans who want high quality at 1440p and know they aren't going to buy a 4K monitor anytime soon can definitely consider this card. It is already a little piece of history, a hugely important milestone in PC technology development. However, those who care more about practicalities such as heat dissipation and power consumption will find Nvidia's GeForce GTX 980 or 980 Ti more suitable.

Pros

- Good performance for 1440p gaming

- Silent when idling

Cons

- 4GB VRAM limitation

- Huge and unwieldy

- Runs hot, requires two 8-pin PCIe connectors

Ratings (Out of 5)

- Performance: 3.5

- Value for Money: 3

- Overall: 3.5
