Software That Allows Blending of Facial Images Across Videos

With the new system, directors will be able to fine-tune performances in post-production rather than on the film set.

Called FaceDirector, the system enables a director to seamlessly blend facial images from a couple of video takes to achieve the desired effect.

"It's not unheard of for a director to re-shoot a crucial scene dozens of times, even 100 or more times, until satisfied," said Markus Gross, vice president of research at Disney Research.

"That not only takes a lot of time - it also can be quite expensive. Now our research team has shown that a director can exert control over an actor's performance after the shoot with just a few takes, saving both time and money," Gross added.

FaceDirector can create a variety of novel, visually plausible versions of actors' performances in close-up and mid-range shots.

Moreover, the system works with normal 2D video input acquired by standard cameras, without the need for additional hardware or 3D face reconstruction.

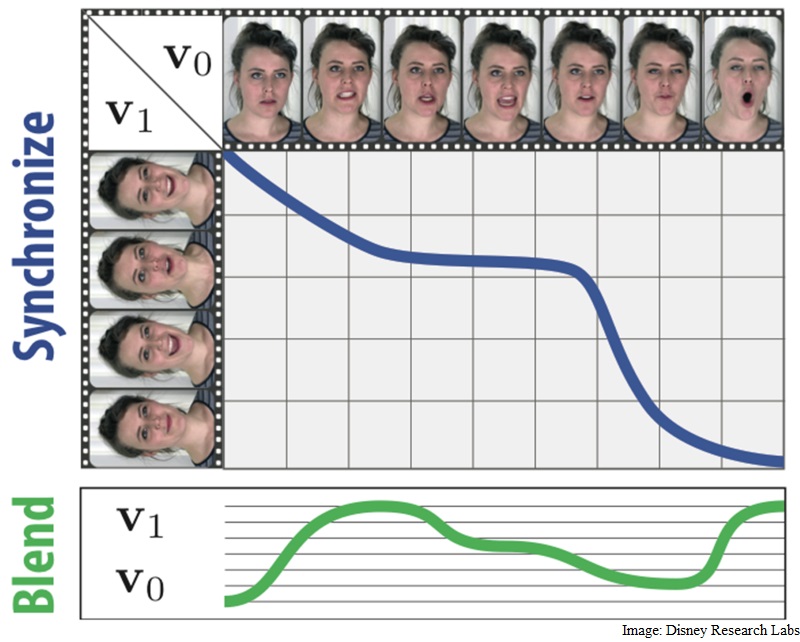

In a first, the system analyses both facial expressions and audio cues. It then identifies frames that correspond between the takes using a graph-based framework.

Once the takes are synchronized, a director can control the performance by choosing the desired facial expressions and timing from either take. The chosen frames are then blended together using facial landmarks, optical flow and compositing.
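To make the idea of finding corresponding frames with a graph more concrete, here is a minimal sketch in Python. It is not FaceDirector's actual algorithm, which the article does not detail. It assumes each frame has already been reduced to a short list of numbers (a hypothetical per-frame feature vector combining expression and audio cues) and swaps in a well-known technique: a shortest-path search over a grid graph, equivalent to dynamic time warping. The names `frame_distance` and `align_takes` are illustrative only.

```python
import heapq
from typing import List, Sequence, Tuple

Frame = Sequence[float]  # assumed per-frame feature vector (hypothetical)


def frame_distance(a: Frame, b: Frame) -> float:
    """Euclidean distance between two per-frame feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


def align_takes(take_a: Sequence[Frame], take_b: Sequence[Frame]) -> List[Tuple[int, int]]:
    """Return (frame in A, frame in B) pairs along the cheapest alignment.

    Each graph node (i, j) pairs frame i of take A with frame j of take B.
    Edges advance one or both takes by a single frame, so the path never
    goes backwards in time. Dijkstra's algorithm finds the path from the
    first pair of frames to the last pair with the lowest total distance.
    """
    n, m = len(take_a), len(take_b)
    if n == 0 or m == 0:
        return []

    start, goal = (0, 0), (n - 1, m - 1)
    best = {start: frame_distance(take_a[0], take_b[0])}
    parent = {}
    heap = [(best[start], start)]

    while heap:
        cost, (i, j) = heapq.heappop(heap)
        if (i, j) == goal:
            break
        if cost > best[(i, j)]:
            continue  # stale heap entry
        for di, dj in ((1, 1), (1, 0), (0, 1)):
            ni, nj = i + di, j + dj
            if ni >= n or nj >= m:
                continue
            new_cost = cost + frame_distance(take_a[ni], take_b[nj])
            if new_cost < best.get((ni, nj), float("inf")):
                best[(ni, nj)] = new_cost
                parent[(ni, nj)] = (i, j)
                heapq.heappush(heap, (new_cost, (ni, nj)))

    # Walk back from the last frame pair to the first.
    path = [goal]
    while path[-1] != start:
        path.append(parent[path[-1]])
    path.reverse()
    return path


# Take B holds its opening expression one frame longer than take A.
take_a = [[0.0], [1.0], [2.0]]
take_b = [[0.0], [0.0], [1.0], [2.0]]
pairs = align_takes(take_a, take_b)
```

In this toy example the first frame of take A is matched to the first two frames of take B, after which the takes advance together. A real system would work with much richer features and would still need the landmark, optical-flow and compositing steps to blend the matched frames.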

The researchers will present their findings at the ongoing International Conference on Computer Vision 2015 in Santiago, Chile.
