Deep Learning: Teaching Computers to See Like People

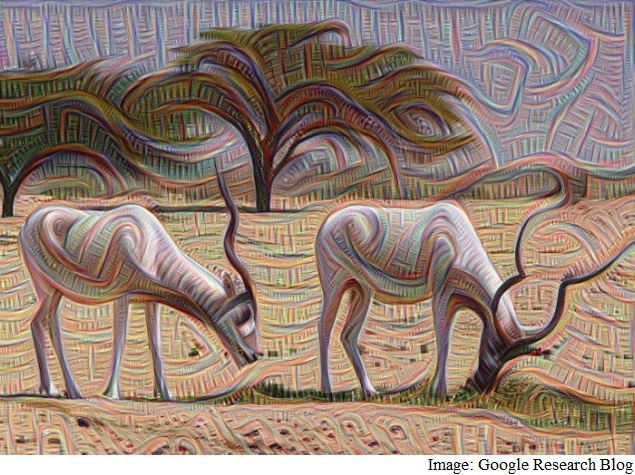

Google's Deep Dream - a visualisation tool to help people understand how neural networks work - was a viral hit but also highlighted some of the challenges with the field of image recognition. It's not enough to simply compare an image against a database and call it a day, obviously; image recognition is a complex problem that some of the biggest companies in the world are working on.

In 2014, Microsoft, Google, and Facebook all published research on different approaches to image recognition. You're already seeing this in action in different ways - most of us have probably been impressed by Picasa and Facebook suggesting whom to tag in photos, and Google and Bing are getting better at recognising images as well. If you read the three companies' research articles, you learn that they all use neural networks, which process the source image through several successive layers to try to identify it. The basis for all this is a process known as deep learning.

NDTV Gadgets caught up with Omar Tayeb, the founder and CTO of augmented reality (AR) firm Blippar, who was visiting Delhi from the company's UK offices along with co-founder and CEO Ambarish Mitra, to learn how Blippar is applying this concept to image recognition and to get a basic understanding of how it works.

Blippar, along with other startups like Wowsome and Times Internet's Alive, uses AR mostly for marketing. Alive has a product for smart wedding cards, and other companies are also trying to use AR in magazine and newspaper ads, while e-commerce sites are starting to use it to offer a virtual shopping experience.


This, however, is just the start of things, Mitra tells NDTV Gadgets. "Right now, when you start the Blippar app, it can't tell you about the chair in front of you, or the apple on your desk, but it'll recognise a bottle of Coke [Coca-Cola]," says Mitra. "Which could show a brand campaign or something like that."

Within the next five to six months, Blippar will launch a "visual Internet", in which the Blippar app will be able to identify objects that aren't necessarily in its catalogue and show users relevant information about them.

"If you look at a car, even if you've never seen that model before, you'll be able to tell that it is some type of car," says Omar Tayeb, the Blippar CTO. "Blippar can't do that right now. It either needs to know the type of car already, and can recognise it, or it does not."

"That's a limitation, but there's something called Deep Learning, where, through a process of iteration, the system actually starts learning," he explains. "The machine learning means that the more the system sees, the more accurate it becomes over time. It's a little like how a baby learns - you see millions of variations of faces as a baby, and that's how you learn to recognise facial features. The system is the same way, and it becomes more intelligent as more people use it."
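
The idea that a system gets better "through a process of iteration" can be illustrated with one of the simplest learning algorithms, the perceptron. This is a minimal sketch, not Blippar's system, and a single-layer perceptron is far simpler than the deep networks discussed here. It assumes each example is a short list of numeric features (hypothetical "roundness" and "redness" scores) with a label of 1 or 0, and it nudges the weights every time the model gets an example wrong.

```python
from typing import List, Tuple

Example = Tuple[List[float], int]  # (feature vector, label 0 or 1)


def predict(weights: List[float], bias: float, features: List[float]) -> int:
    """Return 1 if the weighted sum of the features plus the bias is positive, else 0."""
    total = bias + sum(w * x for w, x in zip(weights, features))
    return 1 if total > 0 else 0


def train_perceptron(examples: List[Example], epochs: int = 10, lr: float = 0.1):
    """Classic perceptron rule: adjust the weights only when a prediction is wrong."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = label - predict(weights, bias, features)  # -1, 0 or +1
            if error:
                weights = [w + lr * error * x for w, x in zip(weights, features)]
                bias += lr * error
    return weights, bias


def accuracy(weights: List[float], bias: float, examples: List[Example]) -> float:
    """Fraction of examples the current weights classify correctly."""
    correct = sum(predict(weights, bias, f) == y for f, y in examples)
    return correct / len(examples)


# Hypothetical toy data: [roundness, redness] in 0..1; label 1 = "apple", 0 = "not an apple".
data = [
    ([0.9, 0.8], 1), ([0.8, 0.9], 1), ([0.2, 0.1], 0),
    ([0.1, 0.3], 0), ([0.85, 0.7], 1), ([0.3, 0.2], 0),
]

before = accuracy([0.0, 0.0], 0.0, data)  # untrained: predicts 0 for everything
weights, bias = train_perceptron(data)
after = accuracy(weights, bias, data)
print(before, after)  # 0.5 1.0
```

The untrained model gets only the "not an apple" examples right; after a couple of passes over the data its mistakes have corrected the weights. Real image systems learn from vastly more examples, which is the sense in which they improve "the more the system sees".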

Essentially, this process is rooted in a concept called pattern recognition. The computer breaks an image down into several layers and, instead of trying to identify the whole image at once, tries to identify individual data points within those layers. It's a bit like searching for words: the more search terms you enter, the more accurate your results should be. According to Tayeb, the goal is to break the image into enough data points to build a good understanding of the object in question.
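
To make "breaking an image into data points" concrete, here is a toy sketch of pattern recognition. It assumes a grayscale image given as a 2D list of brightness values (0 to 255), splits it into a grid of patches, reduces each patch to one number (its mean brightness), and matches the resulting feature vector against stored prototypes by distance. The grid size, the mean-brightness feature and the prototype names are illustrative assumptions; real systems learn far richer features than this.

```python
import math
from typing import Dict, List

Image = List[List[float]]  # grayscale pixel values, rows of equal length


def patch_features(image: Image, grid: int = 2) -> List[float]:
    """Split the image into grid x grid patches and return each patch's mean brightness."""
    rows, cols = len(image), len(image[0])
    ph, pw = rows // grid, cols // grid  # patch size; leftover edge pixels are ignored
    feats = []
    for gr in range(grid):
        for gc in range(grid):
            patch = [image[r][c]
                     for r in range(gr * ph, (gr + 1) * ph)
                     for c in range(gc * pw, (gc + 1) * pw)]
            feats.append(sum(patch) / len(patch))
    return feats


def classify(features: List[float], prototypes: Dict[str, List[float]]) -> str:
    """Return the label whose prototype is closest in Euclidean distance."""
    return min(prototypes, key=lambda label: math.dist(features, prototypes[label]))


# Hypothetical prototypes, one feature per patch (top-left, top-right, bottom-left, bottom-right).
prototypes = {
    "bright_top": [200.0, 200.0, 50.0, 50.0],
    "bright_left": [200.0, 50.0, 200.0, 50.0],
}

img = [
    [210, 190, 60, 40],
    [205, 195, 55, 45],
    [220, 180, 50, 50],
    [200, 200, 40, 60],
]

feats = patch_features(img)
print(feats)                          # [200.0, 50.0, 200.0, 50.0]
print(classify(feats, prototypes))    # bright_left
```

Like adding search terms, a finer grid (more patches) gives more data points to compare, so visually different objects are less likely to be confused, at the cost of more computation.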

"You can't have a database of all the images you need, obviously," says Tayeb. "So it's not going to be enough to identify a particular image; you need to be able to look at it and say this is a chair, and not just identify one specific chair."

Machine learning goes one step beyond pattern recognition, applying logic to how patterns are grouped so that objects can be identified more quickly and accurately. Built on complex mathematical models, machine learning made computers a lot smarter, but the next step, which brings them closer to thinking the way we do, is called deep learning.

Deep learning systems are built on neural networks, so named because they are loosely modelled on neurons and the central nervous systems of animals, particularly the brain. They're used to get a computer to approach problems the way a person would, Tayeb explains.
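
An artificial "neuron" is just a weighted sum of its inputs passed through a non-linear function, and a network stacks layers of them so that each layer works on the previous layer's output. The sketch below shows only that forward pass, in plain Python, with hand-picked weights (an assumption for illustration; real networks learn millions of weights from data, and image networks typically use convolutional layers rather than this fully connected form). The weights are chosen so the two-layer network computes XOR, a function no single neuron can represent, which shows why stacking layers matters.

```python
import math
from typing import List, Tuple

# One layer = (weight rows, biases); each row holds one neuron's input weights.
Layer = Tuple[List[List[float]], List[float]]


def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs: List[float], weights: List[float], bias: float) -> float:
    """One artificial neuron: weighted sum of the inputs plus a bias, then a sigmoid."""
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)


def layer(inputs: List[float], weight_rows: List[List[float]], biases: List[float]) -> List[float]:
    """A layer is several neurons reading the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]


def forward(inputs: List[float], network: List[Layer]) -> List[float]:
    """Pass the input through each layer in turn; each layer's output feeds the next."""
    activations = inputs
    for weight_rows, biases in network:
        activations = layer(activations, weight_rows, biases)
    return activations


# Hand-picked (hypothetical) weights that make the network compute XOR.
xor_net: List[Layer] = [
    ([[20.0, 20.0], [-20.0, -20.0]], [-10.0, 30.0]),  # hidden layer: roughly OR and NAND
    ([[20.0, 20.0]], [-30.0]),                        # output layer: roughly AND of the two
]

for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, round(forward([a, b], xor_net)[0]))
```

Each hidden neuron picks out a simple pattern, and the output neuron combines them into something neither could detect alone; deep networks apply the same idea across many more layers.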

"There is no cataloguing - that's not possible - so you have to be able to pull out structures. That's what the human brain does as well," he explains. "When you're seeing something, the receptors in your eyes are bringing in a huge amount of data, but it doesn't make sense by itself. Your brain has to process the 9-10 million data points that are coming in from your eyes, and see how objects are formed together, and make judgements about what the objects are, and what their properties are."

"For us, the 'brain' of the app is about feeding data - whenever you open the app, which you'll do because there's a campaign or some incentive to get a deal, you also start feeding data to the app," he adds. "And it learns from everything you see and at first, it might require manual identification. But once enough users have shown it a chair and told it that this is a chair, then the process becomes automatic. The app starts to be able to recognise chairs well enough even without intervention; and the same is true for any object. At some point, you might point to an apple and get its nutritional data, or point to a phone and be able to see where you can buy it from."
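
The labelling loop Tayeb describes, where users identify an object manually until the app can do it alone, can be thought of as a voting scheme. This is a hypothetical illustration, not Blippar's pipeline. It assumes each object has already been reduced to a feature key (for example, a cluster ID from some feature extractor), counts user-supplied labels per key, and treats a label as learned once it has at least `min_votes` votes and a clear majority `min_share`. The class name and both thresholds are invented for illustration.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


class CrowdLabeler:
    """Hypothetical sketch: collect user labels until one is trusted enough to apply automatically."""

    def __init__(self, min_votes: int = 3, min_share: float = 0.8):
        self.min_votes = min_votes    # minimum number of labels before trusting any of them
        self.min_share = min_share    # fraction of votes the top label must hold
        self.votes: Dict[str, Counter] = defaultdict(Counter)

    def add_label(self, feature_key: str, label: str) -> None:
        """Record one user's manual identification of an object."""
        self.votes[feature_key][label] += 1

    def auto_label(self, feature_key: str) -> Optional[str]:
        """Return the consensus label if both thresholds are met, else None (manual input still needed)."""
        counts = self.votes.get(feature_key)
        if not counts:
            return None
        label, top = counts.most_common(1)[0]
        total = sum(counts.values())
        if total >= self.min_votes and top / total >= self.min_share:
            return label
        return None


labeler = CrowdLabeler()
labeler.add_label("shape_42", "chair")
labeler.add_label("shape_42", "chair")
print(labeler.auto_label("shape_42"))  # None: only two votes so far
labeler.add_label("shape_42", "chair")
print(labeler.auto_label("shape_42"))  # chair
```

Requiring both a vote count and a majority share keeps a single mistaken or mischievous user from teaching the system the wrong label.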

For Blippar, the goal is to turn any camera into a smart device, whether or not it's connected to a high-end smartphone. "We only need a minimum of a 2-3 megapixel camera, and the 'thinking' all happens on our side, so there's no limits really, we want to be the brains of any camera," Tayeb says.
